Introduction

Search engines rely on a site’s robots.txt file to crawl it effectively and index the relevant pages. When technical teams update a site regularly, the robots.txt file can be accidentally deleted or overwritten, and this can lead to a drastic fall in the site’s SEO ranking. While there are multiple SEO monitoring plugins available, we went a step further.

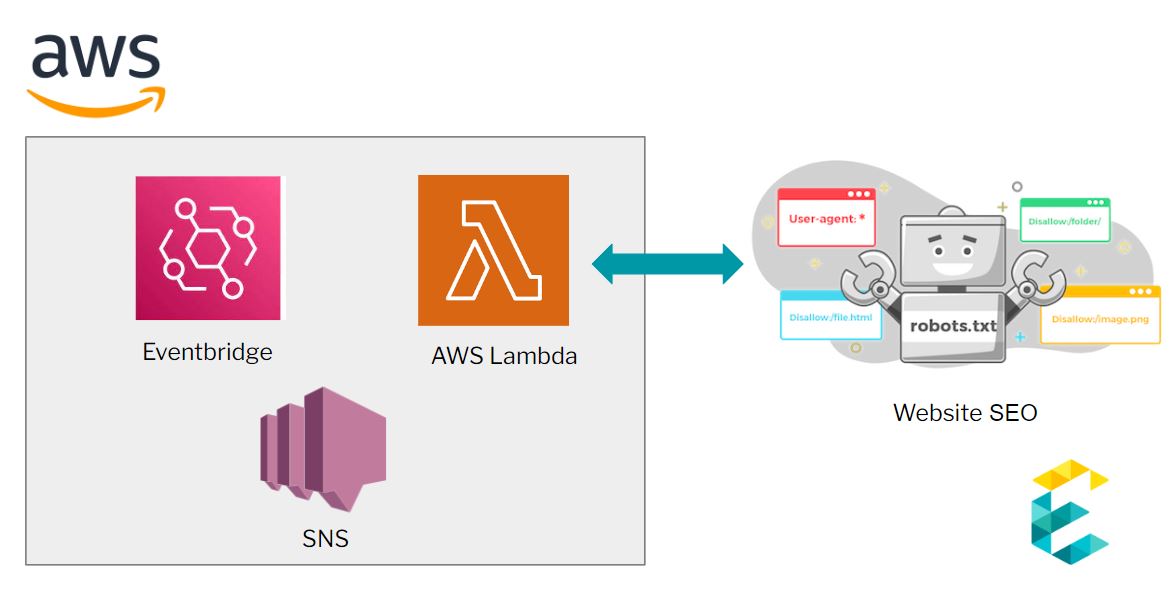

We designed and built a system that monitors the site’s robots.txt file on a regular schedule and notifies the maintenance team when it breaks. The system is built on Amazon Web Services (AWS): an AWS Lambda function performs the automated SEO check, Amazon EventBridge triggers it periodically, and Amazon Simple Notification Service (SNS) sends the notifications. In this article, we describe the system’s architecture and workflow.

The Overall Architecture and Workflow

The main elements of the architecture are:

- AWS Lambda function to implement the logic that checks the robots.txt file

- Amazon EventBridge to trigger that check periodically

- Amazon SNS to send out alerts based on the success or failure of the Lambda function

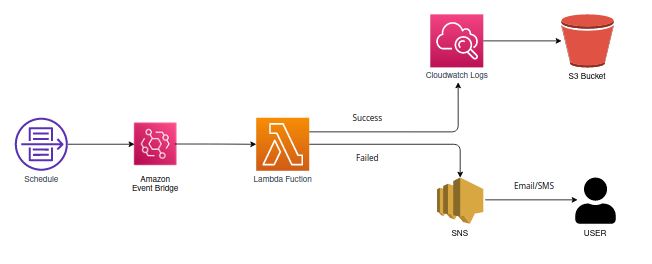

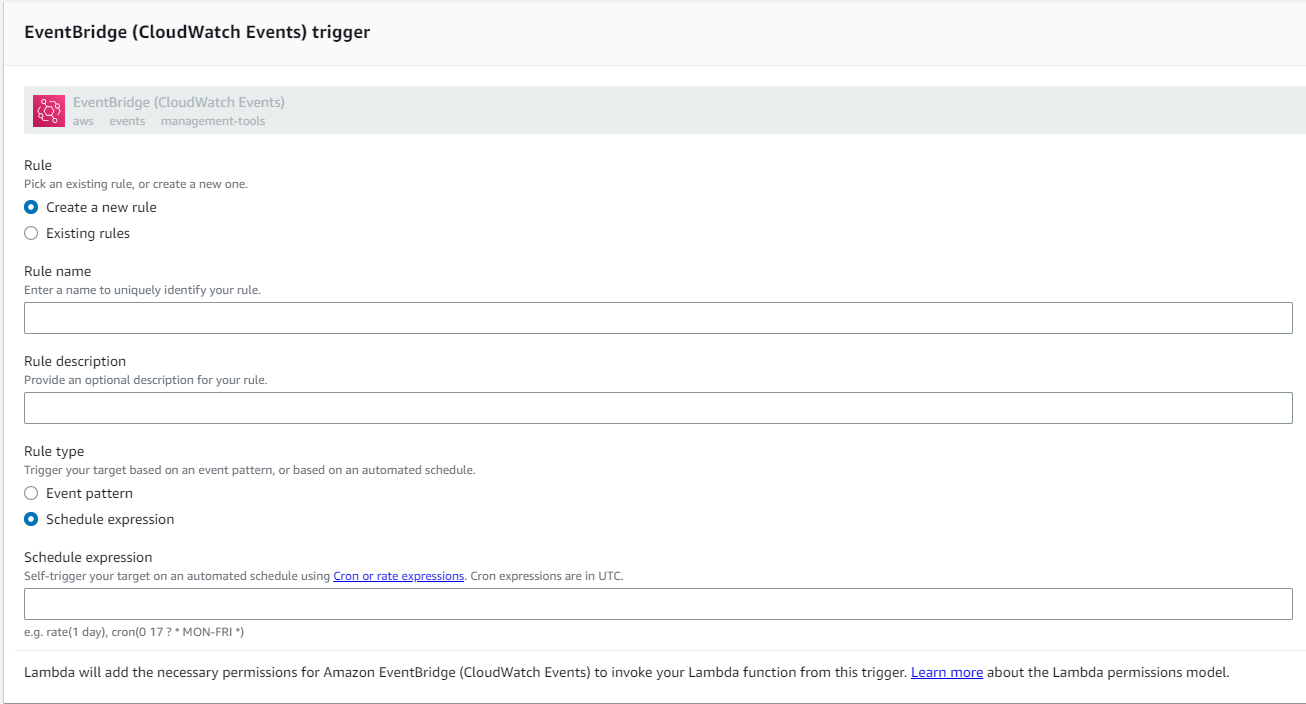

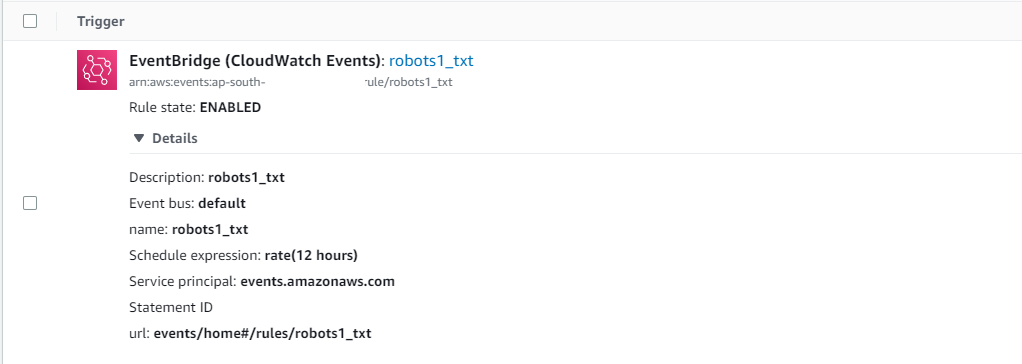

Amazon EventBridge triggers the Lambda function on a predefined schedule, such as twice a day, through a schedule-based rule. In our scenario, we configured the rule to trigger the function every 12 hours. This cadence works well for SEO checks and can also serve page speed and performance monitoring.
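EventBridge schedule rules accept either a rate expression or a cron expression. The helper below is a hypothetical illustration (it is not part of any AWS SDK) of how the 12-hour interval maps to a rate expression; note that EventBridge expects the singular unit when the value is 1. A cron equivalent is shown for comparison; cron expressions on EventBridge rules are evaluated in UTC.

```python
def rate_expression(value: int, unit: str) -> str:
    """Build an EventBridge rate expression such as 'rate(12 hours)'.

    Hypothetical helper for illustration; EventBridge only needs the final string.
    """
    base = unit.rstrip("s")  # accept 'hour' or 'hours'
    if base not in {"minute", "hour", "day"}:
        raise ValueError(f"unsupported unit: {unit!r}")
    if value < 1:
        raise ValueError("value must be a positive integer")
    # Singular unit for a value of 1, plural otherwise.
    return f"rate({value} {base if value == 1 else base + 's'})"


# Equivalent cron expression (minutes hours day-of-month month day-of-week year)
# that fires at 00:00 and 12:00 UTC every day.
TWICE_DAILY_CRON = "cron(0 0/12 * * ? *)"

print(rate_expression(12, "hours"))  # rate(12 hours)
print(rate_expression(1, "day"))     # rate(1 day)
```

A rate expression is simpler when only the interval matters; a cron expression is the better choice when the checks should run at specific times of day.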

When triggered, the Lambda function uses web-scraping libraries to extract relevant information from the site’s HTML and check for specific keywords, meta tags, and other SEO-related elements.
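The article does not name the scraping libraries involved. As a stand-in, here is a minimal sketch that uses only Python’s standard-library html.parser (so it needs no extra Lambda layer) to pull out the page title, the meta description, and the meta robots directive. The class and function names are hypothetical, and the three checks are examples; a "noindex" robots meta tag is one kind of SEO-breaking change worth alerting on.

```python
from html.parser import HTMLParser


class SEOTagParser(HTMLParser):
    """Collect the <title> text and <meta name=...> tags from a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta = {}
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and attrs.get("name"):
            # Meta names are case-insensitive, so normalise them.
            self.meta[attrs["name"].lower()] = attrs.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def seo_problems(html: str) -> list:
    """Return human-readable SEO issues found in the HTML (empty list if none)."""
    parser = SEOTagParser()
    parser.feed(html)
    problems = []
    if not parser.title.strip():
        problems.append("missing <title>")
    if not parser.meta.get("description", "").strip():
        problems.append("missing meta description")
    if "noindex" in parser.meta.get("robots", "").lower():
        problems.append("page is marked noindex")
    return problems


sample = '<title>Home</title><meta name="robots" content="noindex">'
print(seo_problems(sample))  # ['missing meta description', 'page is marked noindex']
```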

If the check fails, Amazon SNS alerts the relevant teams.

The benefits of using an AWS Lambda Function for SEO

There are several reasons why using an AWS Lambda function for SEO monitoring can be beneficial:

- Flexibility: AWS Lambda lets you write your function in a variety of programming languages, such as Python, Node.js, Java, and C#. This means you can use the language that is most comfortable for you or most appropriate for your use case.

- Scalability: AWS Lambda automatically scales your function up or down with the number of incoming requests, so it can handle a high volume of requests without additional configuration (within your account’s concurrency limits).

- Cost-effective: AWS Lambda charges only for the requests and compute time you consume, and you don’t pay for idle time. This makes it cost-effective for long-term monitoring tasks, because you pay only for what you use.

- Robust Integration: AWS Lambda integrates with other AWS services such as S3, DynamoDB, Kinesis, EventBridge, and SNS, so you can easily connect your SEO monitoring function to other parts of your application or infrastructure.

- Reusability: Once you have created a function that monitors your website’s SEO, you can reuse it for other sites or other SEO monitoring tasks. If you ever relaunch your website on a new platform, the Lambda function remains relevant and usable for SEO monitoring with minimal reconfiguration.
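To make the reusability point concrete, one option is to keep the list of monitored sites in configuration rather than in code. This minimal sketch assumes a hypothetical comma-separated SITES environment variable (read with os.environ.get("SITES", "")) and a pluggable check function; neither is part of the blueprint we used.

```python
from typing import Callable, Dict, Iterable, List


def parse_sites(raw: str) -> List[str]:
    """Split a comma-separated list of URLs, ignoring blanks and extra spaces."""
    return [url.strip() for url in raw.split(",") if url.strip()]


def run_checks(sites: Iterable[str], check: Callable[[str], bool]) -> Dict[str, bool]:
    """Apply the same check to every site and return {url: passed}."""
    results = {}
    for url in sites:
        try:
            results[url] = bool(check(url))
        except Exception:
            # Any error (timeout, HTTP 404, parse failure) counts as a failed check.
            results[url] = False
    return results
```

In a handler, you could raise an exception whenever any value in the returned dictionary is False, so that a failure on any one site still marks the invocation as failed and reaches the alerting path.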

Overall, using an AWS Lambda function for SEO monitoring can provide a flexible, cost-effective, and easily scalable solution for monitoring your website's SEO and identifying any issues that may need to be addressed.
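As a rough illustration of the cost argument, Lambda compute usage is measured in GB-seconds (allocated memory in GB multiplied by run time). The sketch below only does that arithmetic; the 128 MB memory size and 2-second run time are assumptions, not measurements from our setup.

```python
def monthly_gb_seconds(runs_per_day: float, seconds_per_run: float,
                       memory_mb: int, days: int = 30) -> float:
    """Estimate monthly compute usage: invocations x duration x memory in GB."""
    invocations = runs_per_day * days
    return invocations * seconds_per_run * (memory_mb / 1024)


# Every 12 hours -> 2 runs per day; assumed 2 s per run at 128 MB.
print(monthly_gb_seconds(runs_per_day=2, seconds_per_run=2.0, memory_mb=128))  # 15.0
```

That is 60 invocations and about 15 GB-seconds a month. For scale, the AWS Free Tier for Lambda has included 1 million requests and 400,000 GB-seconds per month; check current AWS pricing for your Region before relying on these numbers.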

Implementing EventBridge for the AWS Lambda Function for SEO Monitoring

We give the EventBridge rule a name, a description, and an appropriate schedule expression (for example, rate(12 hours) for a check every 12 hours).
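The same rule can also be created programmatically. Below is a minimal sketch using the AWS SDK for Python (boto3), assuming the Lambda function already exists; the rule name, target ID, and statement ID are placeholders. The clients are passed in as arguments so the function can be exercised without AWS credentials. Besides put_rule and put_targets, EventBridge needs a resource-based permission to invoke the function, which the console adds for you.

```python
def schedule_lambda(events, lambda_client, function_arn: str,
                    rule_name: str = "seo-robots-check",  # placeholder name
                    expression: str = "rate(12 hours)") -> str:
    """Create (or update) a scheduled EventBridge rule that invokes a Lambda function.

    `events` and `lambda_client` are boto3 clients, e.g.
    boto3.client("events") and boto3.client("lambda").
    """
    rule = events.put_rule(
        Name=rule_name,
        ScheduleExpression=expression,
        State="ENABLED",
        Description="Periodic robots.txt / SEO check",
    )
    rule_arn = rule["RuleArn"]

    # Point the rule at the Lambda function.
    events.put_targets(
        Rule=rule_name,
        Targets=[{"Id": "seo-check-target", "Arn": function_arn}],  # placeholder Id
    )

    # Allow EventBridge to invoke the function (the console does this automatically).
    lambda_client.add_permission(
        FunctionName=function_arn,
        StatementId=f"{rule_name}-invoke",  # must be unique within the function policy
        Action="lambda:InvokeFunction",
        Principal="events.amazonaws.com",
        SourceArn=rule_arn,
    )
    return rule_arn
```

Running it a second time with the same rule name fails at add_permission because the statement ID already exists, so either catch that error or remove the old statement first.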

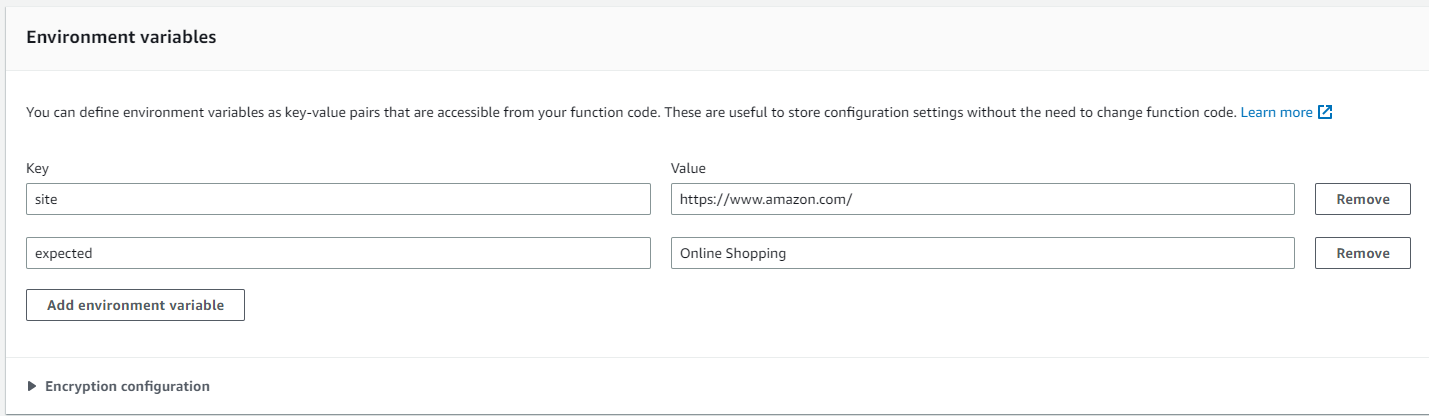

Environment variables

Add environment variables as key-value pairs to pass input to the Python function (for example, the URL to check and the string expected in its response), then click Create function to generate the Lambda function. You can now find the newly created function in the AWS Lambda console; click it to verify your configuration.
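A small guard makes misconfiguration obvious at the start of each run. This sketch reads the two inputs from a mapping such as os.environ; the key names site and expected are assumptions that must match the keys you enter in the console, and the function name is hypothetical.

```python
import os
from typing import Mapping, Tuple

REQUIRED_KEYS = ("site", "expected")  # assumed key names; match your configuration


def load_config(env: Mapping[str, str] = os.environ) -> Tuple[str, str]:
    """Return (site, expected), failing fast with a clear message if a key is missing."""
    missing = [key for key in REQUIRED_KEYS if not env.get(key, "").strip()]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    site = env["site"].strip()
    if not site.startswith(("http://", "https://")):
        raise RuntimeError(f"'site' must be an http(s) URL, got {site!r}")
    return site, env["expected"]
```

Raising here fails the invocation, so a missing or mistyped variable is reported through the same failure path as a broken robots.txt instead of passing silently.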

Lambda function Implementation Details

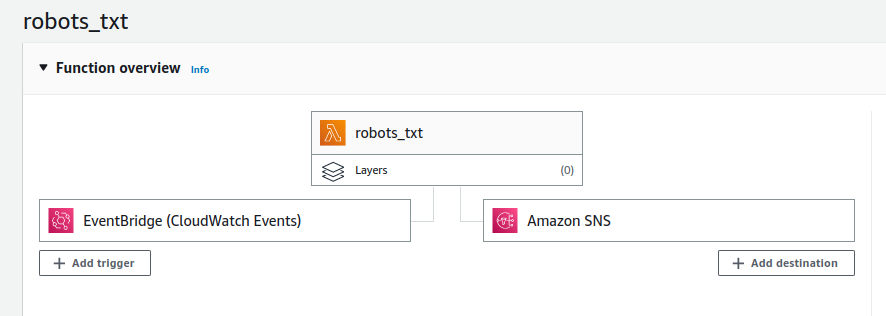

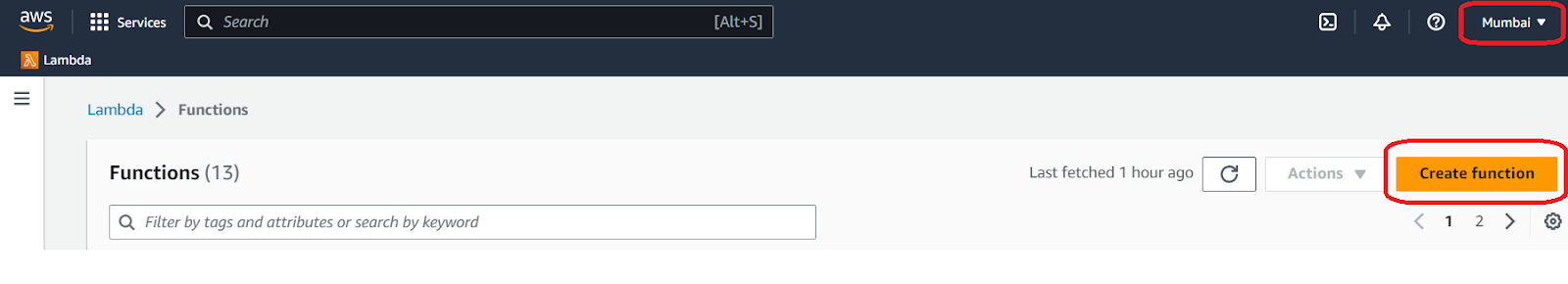

In the AWS Lambda console, select the appropriate Region and click the Create function button to create a new Lambda function.

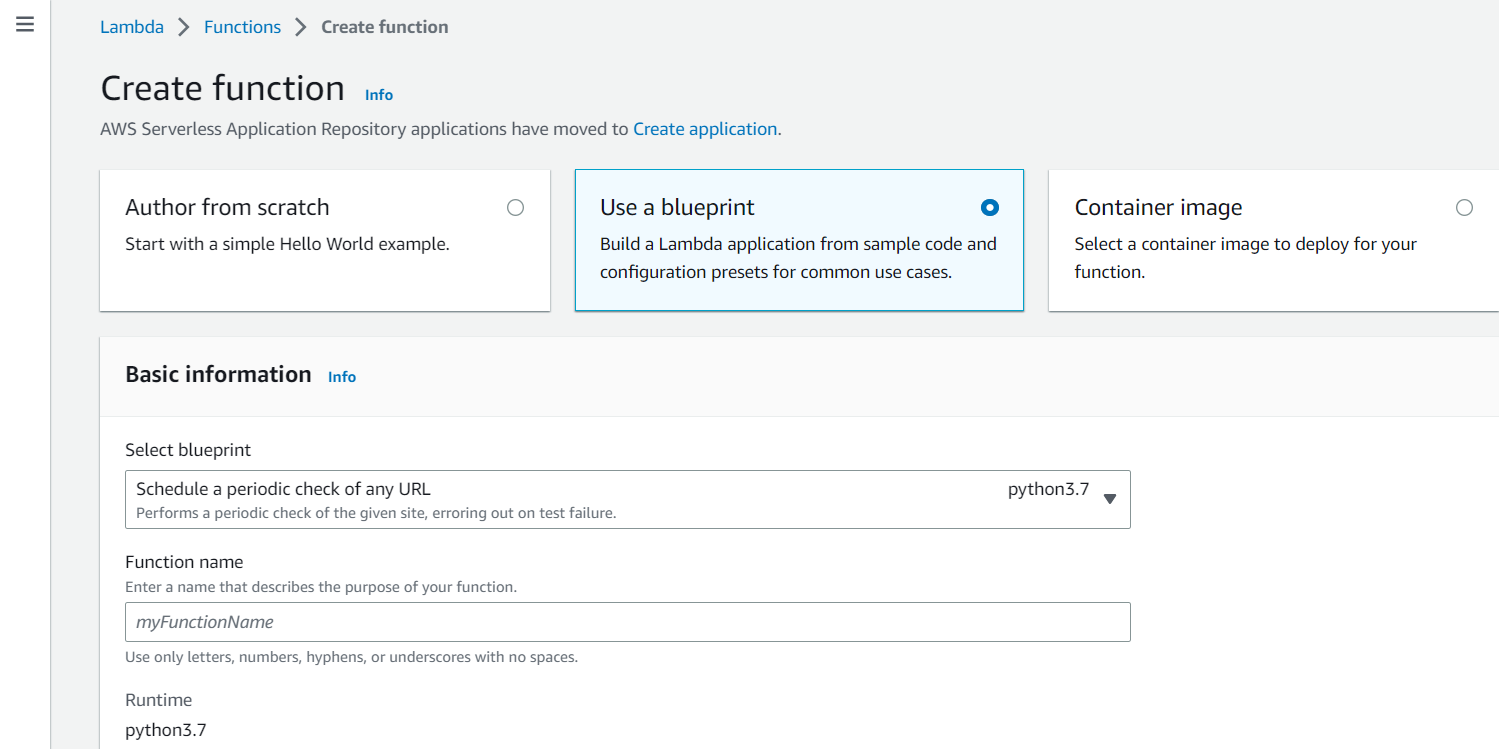

On the Create function screen, select the “Use a blueprint” option. Blueprints provide ready-made code and configuration presets for common use cases, and can save a significant amount of time when developing Lambda functions.

Choose the “Schedule a periodic check of any URL” blueprint with the Python 3.7 runtime, then configure the EventBridge trigger and the environment variables.

To make an HTTP request in Python, we can use the urllib package. Its urllib.request module defines functions and classes that help open URLs, and offers a simple interface in the form of the urlopen() function, which can fetch URLs over a variety of protocols.

Note that some websites block programs that try to access their content (as opposed to a regular user visiting the site). To avoid this, add a User-Agent header when you build the HTTP request.
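Putting these two points together, here is a minimal sketch that fetches a site’s robots.txt with urllib.request, sends an explicit User-Agent header, and sets a timeout so a hung connection cannot use up the function’s run time. The function name and the User-Agent string are illustrative assumptions.

```python
from urllib.error import HTTPError
from urllib.parse import urljoin
from urllib.request import Request, urlopen

USER_AGENT = "SEO-Monitor/1.0 (AWS Lambda)"  # illustrative value; any descriptive UA works


def fetch_robots_txt(site_url: str, timeout: float = 10.0):
    """Return (status_code, body) for the site's /robots.txt.

    HTTP errors such as 404 are returned as a status code instead of raising,
    so the caller decides how to alert; network failures still raise
    urllib.error.URLError.
    """
    robots_url = urljoin(site_url, "/robots.txt")
    req = Request(robots_url, headers={"User-Agent": USER_AGENT})
    try:
        # The context manager closes the connection once the body is read.
        with urlopen(req, timeout=timeout) as response:
            return response.status, response.read().decode("utf-8", errors="replace")
    except HTTPError as err:
        return err.code, ""
```

A deleted robots.txt typically shows up as a 404 status, which the caller can treat as a failed check.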

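```python
import os
from datetime import datetime
from urllib.request import Request, urlopen

# Inputs come from the Lambda environment variables. These key names are
# assumptions; match them to the keys you configured for the function.
SITE = os.environ['site']          # e.g. the full URL of the site's robots.txt
EXPECTED = os.environ['expected']  # a string that must appear in the response


def validate(res):
    '''Return False to trigger the canary.

    Currently this simply checks whether the EXPECTED string is present.
    However, you could modify this to perform any number of arbitrary
    checks on the contents of SITE.
    '''
    return EXPECTED in res


def lambda_handler(event, context):
    # Scheduled EventBridge events carry the trigger time in event['time'].
    print('Checking {} at {}...'.format(SITE, event['time']))
    try:
        # An explicit User-Agent keeps sites from rejecting the request as a bot.
        req = Request(SITE, headers={'User-Agent': 'AWS Lambda'})
        # The context manager closes the response after the body is read;
        # a 404 (e.g. a deleted robots.txt) raises HTTPError here.
        with urlopen(req, timeout=10) as response:
            body = response.read().decode('utf-8', errors='replace')
        if not validate(body):
            raise Exception('Validation failed')
    except Exception:
        print('Check failed!')
        raise  # re-raise so the invocation is recorded as a failure
    else:
        print('Check passed!')
        return event['time']
    finally:
        print('Check complete at {}'.format(str(datetime.now())))
```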

In the above code, we do the following:

- Import Request and urlopen() from urllib.request.

- Using a context manager, we make a request and receive a response with urlopen().

- The response is a file-like object, which means we can call .read() on it. After we read the response, it is closed.

- The response body is then validated against the expected string from the environment variables (described in the earlier section). If validation fails, the function raises an exception, which marks the Lambda invocation as failed.
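The validate() function only looks for a single string. For a robots.txt monitor specifically, a stricter check could parse the file with Python’s standard-library urllib.robotparser and confirm that key pages are still crawlable, which also catches an accidental "Disallow: /". This is a sketch under assumptions: the function name, the default user agents, and the default paths are illustrative, and an empty body is treated as a failure because it usually means the file was removed or replaced.

```python
from urllib.robotparser import RobotFileParser


def robots_allows(robots_txt: str, paths=("/",), agents=("*", "Googlebot")) -> bool:
    """Return True if robots.txt is present and lets every agent crawl every path.

    The default agents and paths are assumptions; list the ones that matter for your site.
    """
    if not robots_txt.strip():
        return False  # empty or missing content counts as a failure
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return all(parser.can_fetch(agent, path) for agent in agents for path in paths)
```

Inside validate(res), you could return robots_allows(res) instead of, or in addition to, the EXPECTED check, provided res is the decoded text of the response rather than the string form of the raw bytes.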

Configure Lambda function with Amazon SNS

Amazon Simple Notification Service (SNS) is a web service that coordinates and manages the delivery of messages to subscribing endpoints or clients, such as email addresses. In this setup, SNS receives messages from the Lambda function rather than invoking it: when an asynchronous invocation of the function fails, Lambda sends a record of that invocation, including the request, the response, and metadata, to an SNS topic.

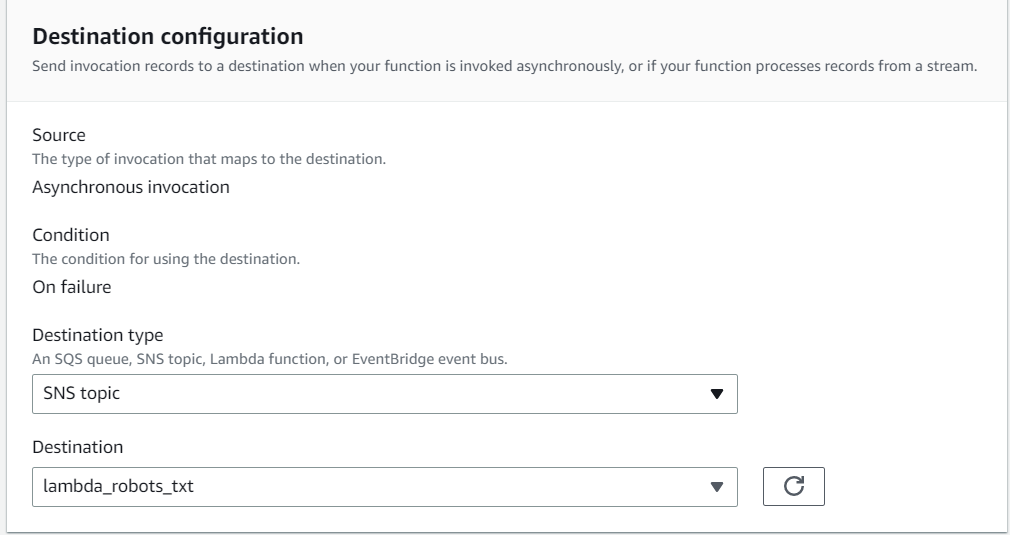

We configure asynchronous invocation of the Lambda function with the SNS topic as its on-failure destination. EventBridge invokes the function asynchronously, so whenever the function fails validation (described in the earlier section), SNS automatically sends a push notification to the topic’s subscribers.
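The on-failure destination can also be configured in code. Below is a minimal boto3 sketch, assuming an email subscription; the function name, topic name, and address are placeholders, and the clients are passed in so the function can be tested without AWS. Keep in mind that the Lambda function’s execution role needs sns:Publish permission on the topic, and that an email subscriber must confirm the subscription before any messages arrive.

```python
def wire_failure_alerts(sns, lambda_client, function_name: str, email: str,
                        topic_name: str = "seo-monitor-alerts") -> str:  # placeholder topic
    """Create an SNS topic, subscribe an email address, and set the topic as the
    on-failure destination for asynchronous invocations of the function.

    `sns` and `lambda_client` are boto3 clients, e.g.
    boto3.client("sns") and boto3.client("lambda").
    """
    # create_topic returns the existing topic's ARN if the name is already in use.
    topic_arn = sns.create_topic(Name=topic_name)["TopicArn"]
    sns.subscribe(TopicArn=topic_arn, Protocol="email", Endpoint=email)

    lambda_client.put_function_event_invoke_config(
        FunctionName=function_name,
        MaximumRetryAttempts=2,  # Lambda's default: retry transient failures before alerting
        DestinationConfig={"OnFailure": {"Destination": topic_arn}},
    )
    return topic_arn
```

Keeping the retries reduces false alarms from one-off network errors; lowering MaximumRetryAttempts to 0 alerts sooner at the cost of more noise.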

Exemplifi Website Maintenance Services

When we work with a customer as a maintenance partner for their website, we carry out a broad range of activities on an ongoing basis. We provide 360° coverage of critical aspects such as:

- Complete ADA compliance

- Up-to-date SEO enhancements

- Analytics Insights

- Performance Monitoring

- Security and Threat Detection

- CMS and Plugin Upgrades

- Content Fixes

- Site Backups and Disaster Recovery

- Template Changes

- Technical Training

- Uptime Monitoring

We identify critical issues and implement changes as necessary to keep the website functioning optimally. This makes a big difference in both the short and the long run, and our clients’ businesses have consistently prospered with our capable and prompt services.

Conclusion

SEO is one of the most important factors that helps your audience find your website organically. Given the pace of website changes these days, it is quite common for development teams to accidentally break or delete a site’s robots.txt file, which tells search engine crawlers what they may crawl; when that happens, the site risks losing its hard-earned SEO ranking and domain authority. In this post, we’ve shared an automated solution built on an AWS Lambda function that lets website owners easily set up a system to monitor their website’s SEO backbone, the robots.txt file, on a regular schedule, giving web admins peace of mind that their bases are covered.

If you liked this insight and would like a partner to help keep your website future-proof, drop us a message below.